“It takes 20 years to build a reputation and a few minutes of a cyber-incident to ruin it.”

― Stephane Nappo

Last year was a year of statistics. We all grew used to news bulletins packed with facts, figures, graphs and numbers. So, here’s another one: 18,362. That’s the number of CVEs the National Vulnerability Database (NVD) published in 2020. That’s higher than in previous years, and the figure looks set to keep rising. As a result, most organisations are struggling to control the sheer volume of software vulnerabilities across their IT estate, and they simply lack the resources to fix them efficiently and effectively.

Despite the scale of the problem being well recognised, few organisations have developed consistent, measurable, and insightful risk reporting that identifies the vulnerability risk profile across the complete attack surface of their business. Unless such reporting is implemented, it is impossible for the Board and senior leadership of an organisation to:

- Establish whether they are operating within risk appetite.

- Assess the effectiveness of their vulnerability management strategies.

- Understand the investment in vulnerability remediation and assess whether the return on investment is proportionate.

- Demonstrate to key stakeholders that the internal control and reporting framework is appropriate relative to the residual information security risks that exist.

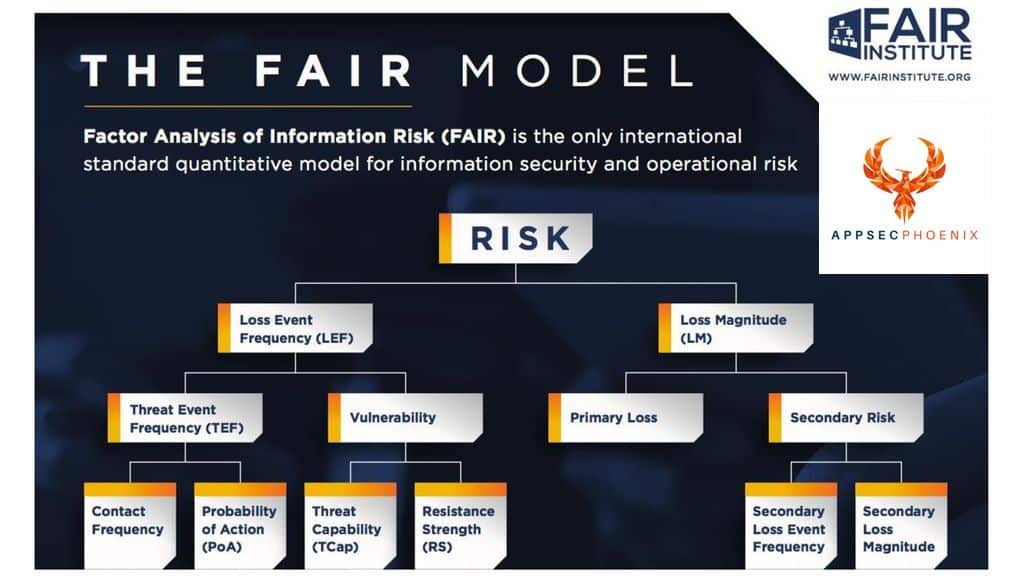

At AppSec Phoenix we have used the Factor Analysis of Information Risk (FAIR) framework to develop meaningful, quantifiable reporting on vulnerability risk. Our starting point is the FAIR risk ontology, which decomposes risk as follows:

$$
\text{Risk} = \text{Threat Event Frequency} \times \text{Vulnerability} \times \text{Loss Magnitude}
$$

Threat Event Frequency, as the name suggests, is a function of Contact Frequency and Probability of Action. Until recently, the existence of an exploit in the wild was the most relevant proxy for the probability that an exposed vulnerability could be used to compromise a firm’s network. As more data becomes available, however, predictive machine learning is starting to be used to identify which vulnerabilities are most likely to be exploited in the future.
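The multiplication in the ontology can be illustrated with point estimates. The Python sketch below is a minimal illustration, not our risk engine: it assumes a single annualised estimate for each factor, whereas FAIR analyses usually work with ranges and Monte Carlo simulation. The `FairInputs` class, the helper functions and the example figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FairInputs:
    """Hypothetical point estimates for one vulnerability (annualised)."""
    contact_frequency: float      # expected threat contacts per year
    probability_of_action: float  # chance a contact turns into an attack (0..1)
    vulnerability: float          # chance an attack succeeds (0..1)
    loss_magnitude: float         # expected loss per loss event, in currency units


def threat_event_frequency(inputs: FairInputs) -> float:
    # TEF: how often per year a threat agent acts against the asset
    return inputs.contact_frequency * inputs.probability_of_action


def loss_event_frequency(inputs: FairInputs) -> float:
    # LEF: the share of threat events that become loss events
    return threat_event_frequency(inputs) * inputs.vulnerability


def annualised_risk(inputs: FairInputs) -> float:
    # Risk = TEF x Vulnerability x Loss Magnitude (expected loss per year)
    return loss_event_frequency(inputs) * inputs.loss_magnitude


# Hypothetical example figures
example = FairInputs(
    contact_frequency=12.0,
    probability_of_action=0.25,
    vulnerability=0.5,
    loss_magnitude=50_000.0,
)
print(annualised_risk(example))  # → 75000.0
```

Because Vulnerability is a probability, multiplying it by Threat Event Frequency gives the Loss Event Frequency (loss events expected per year); multiplying that by Loss Magnitude yields an expected annual loss.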

The CVSS score is the industry standard for measuring the severity of a vulnerability. We have added a new parameter to our risk engine, Vulnerability Density, which identifies clusters of vulnerabilities at the component level that exceed the organisation’s risk threshold.

Loss Magnitude is evaluated by measuring both the Primary Loss (the loss that materialises directly from the event) and the Secondary Risk (the potential for secondary stakeholders to react to the primary event, for example with a regulatory sanction).
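One way to picture Vulnerability Density and the split of Loss Magnitude is the sketch below. The density formula used in our engine is not described here, so this example assumes a simple stand-in: the sum of CVSS base scores per component, compared against a single organisation-wide threshold. The secondary term is likewise simplified to the probability that secondary stakeholders react, multiplied by the loss if they do. All names, scores and thresholds are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical finding format: (component name, CVSS base score 0.0-10.0)
Finding = Tuple[str, float]


def vulnerability_density(findings: Iterable[Finding]) -> Dict[str, float]:
    """Sum CVSS base scores per component (an assumed density measure)."""
    density: Dict[str, float] = defaultdict(float)
    for component, cvss in findings:
        density[component] += cvss
    return dict(density)


def dense_components(findings: Iterable[Finding], threshold: float) -> List[str]:
    """Return components whose density exceeds the organisation's threshold."""
    density = vulnerability_density(findings)
    return sorted(c for c, d in density.items() if d > threshold)


def loss_magnitude(primary_loss: float,
                   secondary_loss_probability: float,
                   secondary_loss: float) -> float:
    """Primary loss plus expected secondary loss (e.g. a regulatory sanction)."""
    return primary_loss + secondary_loss_probability * secondary_loss


findings = [
    ("auth-service", 9.8),
    ("auth-service", 7.5),
    ("auth-service", 5.3),
    ("billing-api", 4.3),
    ("frontend", 6.1),
]
print(dense_components(findings, threshold=15.0))  # → ['auth-service']
print(loss_magnitude(200_000.0, 0.25, 400_000.0))  # → 300000.0
```

Summing scores rewards components that accumulate many medium findings as well as a few critical ones, which is the kind of cluster a per-vulnerability CVSS view tends to miss.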

The risk assessment starts with each individual vulnerability; the results are then aggregated at every level and consolidated into a report covering the entire organisation. The benefits of this systematic and objective risk reporting go beyond protecting an organisation against loss. Our model also improves the working relationship between the Information Risk and Software Development teams, and consequently:

- There is a reference point which facilitates a constructive and objective discussion between the business and technology teams.

- Measurable KPIs can be agreed.

- Management can measure ‘cost to fix’ against the risk mitigation achieved and calculate the return on investment.

- There is increased visibility on the true costs of maintaining a cyber secure application architecture.
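‘Cost to fix’ measured against the risk mitigation achieved can be expressed as a simple return-on-investment ratio. This sketch assumes risk is measured as annualised expected loss (as in a FAIR calculation) and that the cost of a fix is a one-off figure in the same currency; the function name, fix names and figures are hypothetical.

```python
from typing import Dict, Tuple


def remediation_roi(risk_before: float, risk_after: float, cost_to_fix: float) -> float:
    """ROI of a fix: (risk reduction - cost) / cost."""
    if cost_to_fix <= 0:
        raise ValueError("cost_to_fix must be positive")
    risk_reduction = risk_before - risk_after
    return (risk_reduction - cost_to_fix) / cost_to_fix


# Hypothetical fixes: (risk before, risk after, cost to fix)
fixes: Dict[str, Tuple[float, float, float]] = {
    "upgrade-openssl": (75_000.0, 15_000.0, 20_000.0),
    "rewrite-auth": (40_000.0, 10_000.0, 60_000.0),
}

# Rank candidate fixes from best to worst return
ranked = sorted(fixes, key=lambda name: remediation_roi(*fixes[name]), reverse=True)
print(ranked)  # → ['upgrade-openssl', 'rewrite-auth']
```

Ranking fixes this way gives the business and technology teams a shared, numeric basis for deciding what to remediate first.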

Governance forums also need aggregated reporting across the organisation, as well as heat maps showing the risk profile down to sub-component level to highlight any ‘hot-spots’. This holistic, data-driven approach to application security risk management is now a prerequisite for any organisation that wants to honour its obligations to stakeholders, improve operational performance and, ultimately, boost its bottom line.
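Rolling per-vulnerability risk up to the whole organisation and flagging hot-spots can be sketched as a simple tree aggregation. This assumes a three-level hierarchy (business unit, application, component) and risks expressed as annualised expected losses, which can be summed; percentile-based measures, such as a 95th-percentile loss, cannot simply be added. The record layout, names and threshold are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical record: (business unit, application, component, annualised risk)
Record = Tuple[str, str, str, float]


def roll_up(records: List[Record]) -> Dict[Tuple[str, ...], float]:
    """Sum per-vulnerability risk at every level of the hierarchy.

    The empty tuple () is the whole organisation; longer tuples are
    business units, applications and components.
    """
    totals: Dict[Tuple[str, ...], float] = defaultdict(float)
    for unit, app, component, risk in records:
        path = (unit, app, component)
        for depth in range(len(path) + 1):
            totals[path[:depth]] += risk
    return dict(totals)


def hot_spots(totals: Dict[Tuple[str, ...], float],
              threshold: float) -> List[Tuple[str, ...]]:
    """Components (depth 3) whose aggregated risk exceeds the threshold."""
    return sorted(p for p, r in totals.items() if len(p) == 3 and r > threshold)


records = [
    ("Retail", "web-shop", "checkout", 40_000.0),
    ("Retail", "web-shop", "checkout", 35_000.0),
    ("Retail", "web-shop", "search", 5_000.0),
    ("Finance", "ledger", "reporting", 20_000.0),
]
totals = roll_up(records)
print(totals[()])                              # → 100000.0
print(totals[("Retail",)])                     # → 80000.0
print(hot_spots(totals, threshold=50_000.0))   # → [('Retail', 'web-shop', 'checkout')]
```

The same `totals` dictionary can feed both the organisation-level summary and a heat map, since every level of the hierarchy is computed in a single pass.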

For more information, please contact us at AppSec Phoenix!